Background

Let's say you’re an administrator for a large company that has a substantial Office365 tenant that consist of many SharePoint sites (e.g. more than 20000). And you want to run some kind of monitoring or maintenance script on all those sites. Of course you want to use PnP PowerShell for that.

When you've developed the script you notice processing each site takes a fair amount of time (e.g. more than a couple of seconds). Combine this with the large number of sites and your script needs a considerable amount of time to complete. You perhaps wants to run it on a schedule and not on your own machine either. You then have the option to run it on a local server or in Azure Automation.

The local server may have some disadvantages related to network connectivity etc. That can happen quite often in large enterprises.

Azure Automation is a joy to work with but in this case it also has a downside.

Azure automation scripts are constricted to a maximum run time of three hours

See Automation runbook execution. If a script runs longer than three hours you will run into the error

The job was evicted and subsequently reached a Stopped state. The job cannot continue running.

Solution

Basically any script that iterates over a collection could be done in a batched way. This means you only process a part of the whole collection in one go. By adjusting your script slightly and using Microsoft Flow/Power Automate to call the Automation Job you can reduce the run time per batch to stay under three hours.

The collection in my case was the total number of site collections in the tenant. By doing 19 batches of a 1000 I could process all sites.

Here's the general procedure:

Update the script so it handles a batch instead of the whole collection.

Create a Flow that takes the total number of items (site collections) and a batchsize and the calls the Automation runbook a certain number of times (the batch count).

In this way you can keep running automation jobs in batches until everything is done, as long as each batch takes less than three hours. This is one way to solve this constraint of Azure Automation in an straightforward way.

Flow

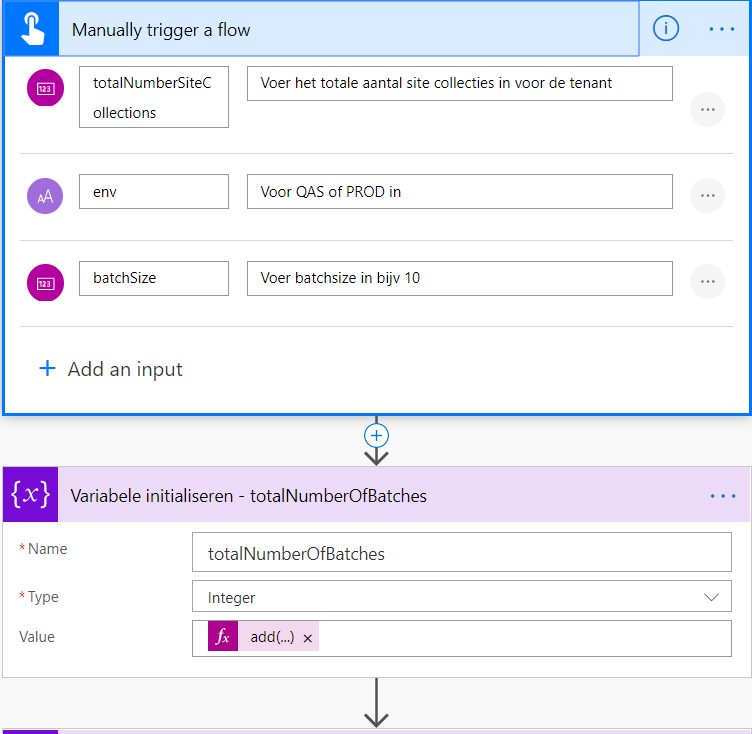

Start of Flow - Manual Flow trigger

Start of Flow - Manual Flow trigger

With below expression I calculate the number of batches to do, this is done via integer division. Divide the total number of site collections you want to process by the batchsize. The total number of sites will have to be guessed or calculated through some other way, the trick is to estimate a larger value than the real value otherwise you wont process all sites.

add(div(triggerBody()['number'],triggerBody()['number_1']),1)

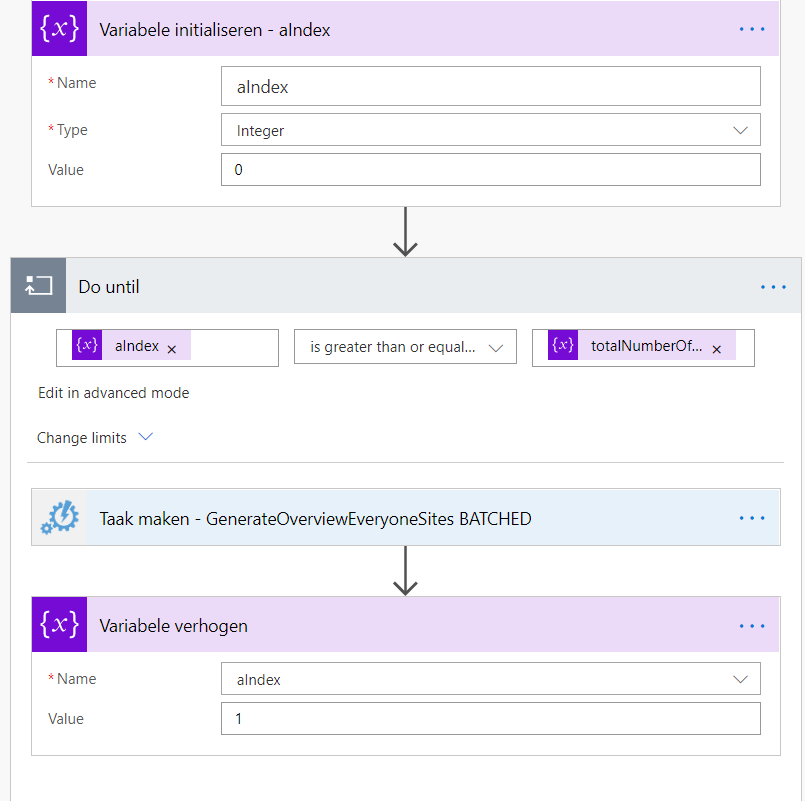

Flow continued - loop

Flow continued - loop

Run the flow until the index has been increased until greater than or equal to the total number of batches we calculated.

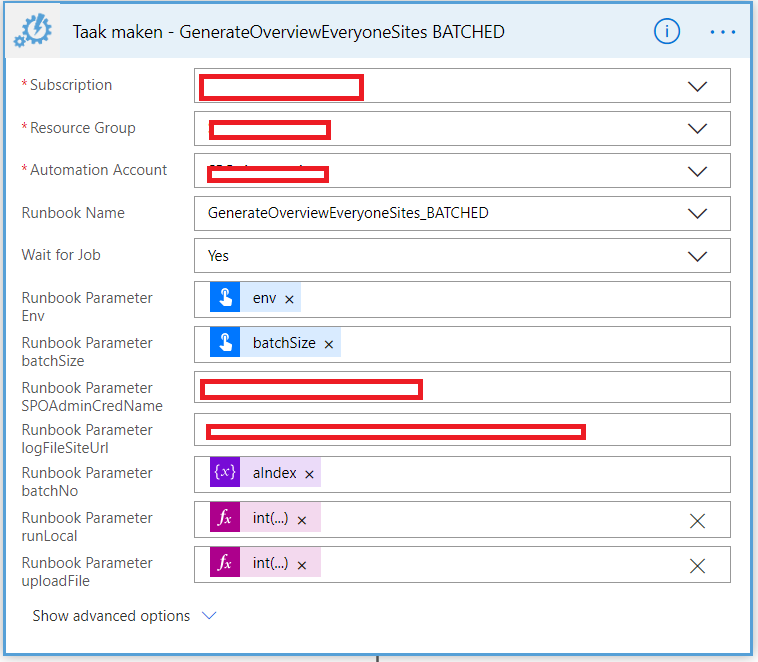

Flow continued - Run Azure Automation action

Flow continued - Run Azure Automation action

Here you see the Azure Automation action. This is a premium connector by the way. In case you use boolean parameters in you runbook then you need to perform the following trick.

Instead of selecting Yes or No, use a manual parameter then use below expressions for False or True

- int('0') : False

- int('1') : True

Azure Automation PowerShell runbook

Here's part of a PowerShell script that you could setup to run in a batched way. Notice the parameters $batchNo (batch number) and $batchSize

In the code I get all site collections and the tenant and then using the -skip and -first actions of the select command I get the set of site collections to do in this batch.

Where it says 'PROCESS SITE HERE' you can add your code to process a single site.

param (

[Parameter(Mandatory = $true)]

[ValidateSet("QAS","PROD")]

[string]

$env,

[Parameter(Mandatory=$true)][string]$logFileSiteUrl,

[Parameter(Mandatory=$false)][string]$SPOAdminCredName,

[Parameter(Mandatory=$true)][int]$batchNo = 0,

[Parameter(Mandatory=$true)][int]$batchSize = 10,

[Parameter(Mandatory=$true)][bool]$uploadFile,

[Parameter(Mandatory=$true)][bool]$runLocal

)

$spoAdminCred = Get-AutomationPSCredential -Name $SPOAdminCredName;

$conn = Connect-PnPOnline -Url $tenantUrl -Credentials $spoAdminCred;

$allSiteCollections = Get-PnPTenantSite;

$totalNumberOfSiteCollections = $siteCollections.Length;

Write-Output "Total number of site collections: $totalNumberOfSiteCollections";

$startIndex = ($batchNo * $batchSize);

$siteCollections = $allSiteCollections | select -Skip $startIndex;

$siteCollections = $siteCollections | select -First $batchSize;

$numberOfSiteCollections = $siteCollections.Length;

Write-Output "Starting from site collection number $startIndex with batchsize $batchSize";

if ($siteCollections -and $siteCollections.Length -gt 0)

{

$aIndex = 0;

foreach ($aSiteCollection in $siteCollections)

{

Write-Output "Starting with site number $aIndex of $numberOfSiteCollections";

#PROCESS SITE HERE

$aIndex++;

}

if ($uploadFile)

{

Write-Output "Uploading $logPath to $logFileSiteUrl";

UploadLog -siteLocation $logFileSiteUrl;

}

else

{

Write-Output "Uploading file $logPath is NOT enabled.";

}

}